Simple A/B Testing

Monday, December 6th, 2010

A few months ago we changed the resources index on www.pcc.edu. Rather than one long list, visitors are initially presented with the student resources. An interface at the top allows you to switch to the faculty resources, or to a list of all the resources.

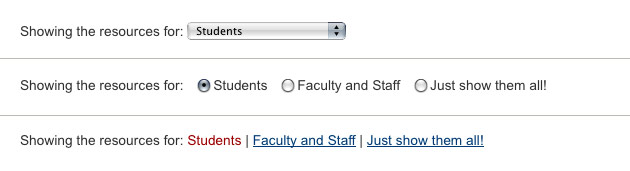

We included a hash feature, so that faculty could link directly to the alternative lists. However, there were still some concerns about users not noticing the interface the first time. The original interface was a select list (or drop-down list). We decided to do some quick A/B testing on two alternatives: radio buttons and plain links.

From Wednesday night to Monday morning (a lower-traffic period), visitors were randomly presented with one of the three interfaces. If the interface was used, the time elapsed since the page loaded was recorded. Only the first change was recorded.
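The setup described above can be sketched roughly as follows. This is not the code that ran on the site; it is a minimal Python illustration of the same idea, and the names `VARIANTS`, `Visit`, and `record_change` are hypothetical.

```python
import random
import time

# Hypothetical labels for the three interfaces under test.
VARIANTS = ("select", "radio", "links")


class Visit:
    """One page view: picks an interface at random and times the first use."""

    def __init__(self, rng=random, clock=time.monotonic):
        # Each visitor gets one of the three interfaces with equal probability.
        self.variant = rng.choice(VARIANTS)
        self._clock = clock
        # The clock starts when the page is loaded.
        self._loaded_at = clock()
        self.first_change_seconds = None

    def record_change(self):
        """Record the elapsed time on the first change only; ignore later ones."""
        if self.first_change_seconds is None:
            self.first_change_seconds = self._clock() - self._loaded_at
        return self.first_change_seconds
```

Passing in the random source and the clock is only a convenience so the sketch can be exercised with fixed values; a real page would do the equivalent in the browser.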

The surprising data

This very simple test produced some interesting results:

| Interface | Use Count | Average Time | Median Time |
|---|---|---|---|
| Select List | 125 | 26.74 sec | 7.02 sec |
| Radio Buttons | 1612 | 66.59 sec | 4.45 sec |
| Links (current in red) | 156 | 100.45 sec | 7.37 sec |

Here is the raw data: Select, Radio, Link

The Results

The numbers clearly show that the radio button interface was used more than 10 times as often as either of the others. It also took considerably less time to use (according to the median).

It is amazing how much of a difference a small change can make.

Notes

You should notice a huge gap between the average and median times. The test worked by starting a clock when the page was loaded. When the list was changed, the amount of time that had elapsed was recorded. It seems that in a few cases someone opened the page, only to return hours later. The longest time was about 22 hours. This really skewed the averages.
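To see why one stale tab distorts the average but not the median, here is a small Python sketch. The sample times are invented for illustration and are not taken from the raw data; only the 22-hour figure (79,200 seconds) echoes the longest time mentioned above.

```python
import statistics

# Made-up first-use times in seconds, plus one visitor who came back
# about 22 hours (79,200 seconds) after loading the page.
SAMPLE_TIMES = [3, 4, 5, 7, 10, 79200]


def summarize(times):
    """Return (mean, median) of a list of elapsed times in seconds."""
    return statistics.mean(times), statistics.median(times)


mean_time, median_time = summarize(SAMPLE_TIMES)
print(f"mean:   {mean_time:.1f} sec")
print(f"median: {median_time:.1f} sec")
```

With these numbers the mean lands in the thousands of seconds while the median stays at a few seconds, which is the same pattern as in the table.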

In addition … At first, I was so surprised by the results that I thought my test was broken. To make sure that the three interfaces were showing at random, I started tracking interface views, regardless of use. Here are the respective numbers from Friday to Monday: 2222, 2252, 2171.
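One way to check that view counts like these are consistent with an even three-way split is a chi-square goodness-of-fit test. The sketch below is an addition, not part of the original test; the function name `chi_square_uniform` is hypothetical, and it relies on the fact that for two degrees of freedom the chi-square p-value is simply exp(-x/2).

```python
import math


def chi_square_uniform(counts):
    """Chi-square statistic against the hypothesis of equal expected counts."""
    total = sum(counts)
    expected = total / len(counts)
    return sum((c - expected) ** 2 / expected for c in counts)


# View counts reported for the three interfaces, Friday to Monday.
views = [2222, 2252, 2171]

stat = chi_square_uniform(views)
# Three categories give 2 degrees of freedom, where the survival
# function of the chi-square distribution is exactly exp(-x / 2).
p_value = math.exp(-stat / 2)

print(f"chi-square = {stat:.2f}, p = {p_value:.2f}")
```

The statistic comes out at roughly 1.5, well below the usual 5.99 cutoff for two degrees of freedom at the 5% level, so these counts give no reason to doubt that the interfaces were shown evenly.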